Among other insights, Hall highlights why AI-based tools such as ToxMod add significant value in a Live Ops gaming context

Everyone is in visitor services when you work at a zoo. I think taking that kind of a mentality and applying it to player support, which has a very strong customer service streak to it as well, it’s like, “Let’s make sure that our players are supported.” But it [player support in a video game studio context] differs from customer support in that you’re taking all that information and potentially working with product managers to build a roadmap of what might enter development in the future.—Laura Hall

Laura Hall, a Senior Player Support Specialist at Schell Games in Pittsburgh, PA, had one of the more unlikely career paths into gaming: Zoo communications → Video game production.

That’s not a typo.

“My undergrad degree was in media arts,” Hall told Player Driven host Greg Posner in a December 2025 discussion that was published in January. After stints in broadcasting and in communications jobs, Hall was hired as “Communications Manager for the Pittsburgh Zoo. And it was a blast. As you might imagine, I’m a huge animal lover. So much fun!”

“What does a Communications Manager at a zoo do?” Hall asked rhetorically. “I managed our commercial production and video production, our website. We got a really cool grant to make an app and a location-based experience. We talked to a bunch of different studios and we ended up working with a studio called Schell Games.”

“For two and a half years, from the zoo’s perspective,” Hall continued, “I project managed the creation of an app…that you could [open]…and say, ‘Oh, I can identify that lion.’ It had all the names of the animals in it. Facts about how old they are, and then general information about whatever animal you’re talking about. There were also mini games throughout the park” if you used the app at the zoo.

“I worked with a very talented team at Schell to develop this app. As we closed on the production of the app...I still loved my position at the zoo, but Schell Games had an opportunity as a producer opening up.”

“While I’d been working I also got a master’s degree in organizational leadership,” Hall explained. “A lot of those things where I was at the zoo…I’m rallying a group of keepers and administrators and people who I would work with to write newsletter articles and things like that…How do you make sure that everything that’s happening—whether you’re developing an app or a commercial—it’s happening on a schedule, on budget, on time?”

“I hadn’t worked in game development per se, but I knew enough about that process, and my sister is a software engineer, so I knew a lot about development from her. I thought, ‘I’m gonna take a leap here and give it a try…’”

Hall hasn’t regretted the leap.

“I absolutely love game development and working in a studio and being a part of that process, and now advocating for players.”

Hall made that jump eight years ago. Her first four years at Schell were spent in production. Since then, she’s effectively blazed her own trail in her current Player Support Specialist role.

“It’s a position that we didn’t previously have,” Hall told Posner.

Schell Games, Hall elaborated, offers “some internal IP projects. The I Expect You to Die series. We’ve created Until You Fall. We just launched Project Freefall, which is in early access on Steam. We’ve created Among Us 3D, previously Among Us VR. We’ve done all kinds of internal IP, as well as a fair amount of client work,” which relates back to her job at the Pittsburgh Zoo.

In case you’re interested, the Schell Games portfolio is summarized here.

Something that distinguishes the company, Hall told Posner, is that “we’ve done a lot of VR-specific games. The VR experiences are really cool.”

“You might be a mobile gamer. You might have a console. VR gamers, I think, are a specific breed. At Schell, we’ve gotten pretty darn good at making VR experiences. It’s been an awesome experience, especially with things like Among Us VR, Among Us 3D.”

“Among Us 3D is available both on Steam for non-VR players and on Steam [Index], PlayStation, Meta, and Pico, for VR players,” Hall said.

“We worked very closely with Innersloth to create what was originally Among Us VR. You’re playing in a 3D space, you’re in a VR headset, and you’re playing that iconic gameplay.”

“We got a lot of feedback from our player community that said, ‘Hey, I don’t have a VR headset, but this looks so cool. And I love Among Us, and I really want to try this out.’ So, we thought, ‘Maybe we could take this experience and bring it to PC so that the folks without a VR headset could still play this experience.’ In May 2025 we launched Among Us 3D.”

Hall’s player support role is on the product team, not customer service. She works more closely with engineers as a result.

Schell’s QA team, according to Hall, finds “a lot of good stuff, but sometimes when things are out there in the wild, and they’re [players are] behaving differently, because they’re…playing on machines in a different time zone or with a different format, they can find new stuff. We want to make sure that we have an ear to the ground to discover new issues. I think it’s really important to never discount anything that someone is reporting…”

Occasionally, Hall needs to pull aside someone in QA or engineering and say, “I want to respect what we already have on the product roadmap, but here’s something that came up. I have to, as player support, care about this because I’m the voice of the players internally at the studio.”

The soft skills she honed at the zoo come in handy in these situations.

“Whether you’re talking to a veterinarian or someone who works at the front gates,” Hall told Posner, “those soft skills are universally applicable the same way. Whether you’re talking to an engineer or you’re talking to a designer or you’re talking to an artist or a client, it’s really just being able to work with people, being kind and affable and empathetic.”

Posner shifted gears and asked Hall what game she’d recently played (that wasn’t related to her day job).

“I have an eight, a five, and a three-year-old at home,” Hall responded. “We love our Nintendo Switch.”

“I think it was [Cranky Watermelon’s] Boomerang Fu. I’m always the pineapple. My son’s always the boba tea.”

Posner countered that he’s been enjoying MARVEL Cosmic Invasion with his seven-year-old boy.

Hall not only knew the game, she said her household has rocked the game’s four-controller couch co-op mode.

Hall’s three-year-old daughter is “on my team because we have two boys and a girl. We’re always She-Hulk and Phoenix. She tells me when to switch between the characters...”

“Games can be so wonderful in a way that I think is exclusive to gaming. You can bond with other people. You can have these shared experiences” that are unlike watching a movie or TV show together, she added.

Tru dat.

Read on to get Hall’s perspectives on:

Key elements in Schell’s player support framework

Support systems related to reporting players and protecting minors

Why AI tools are making rapid inroads in player support

Don’t forget to sign up for our newsletter if you haven’t already and share this profile if you enjoy it!

Player Support System Building Blocks

When I started in player support, it was first, like, “Okay, let’s come up with a pipeline to make sure that we can talk with our players. Let’s make sure that when they bring something to our attention, it is both responded to and documented somewhere, and then taken back to development teams so that we can properly discern if there is something here that needs some action.” So that was a big initiative.—Laura Hall

When Schell Games’ Senior Player Support Specialist Laura Hall started in her current position, she told Player Driven’s host Greg Posner late last year, “We didn’t have a player support department at that time. When the opportunity came to build something from the ground up like this…”

Hall backed up a step.

“We were trying really hard. But we had a number of titles of original IP. And when someone emailed about those titles, it went to sort of a hodgepodge distribution list of directors and producers. And maybe…our QA department. And it was like, ‘Is someone going to get the door? Like, who’s going to answer this one? I’m not entirely sure. This person’s on this project now...’ [and] that can lend itself to things falling through the cracks.”

Hall recognized a need to fill these gaps.

“This position has given me an opportunity to grow something from the ground up” and address those kinds of leaky floorboards, she explained to Posner.

“Now we have that all streamlined,” Hall added. “Then along came moderation when we created Among Us 3D. We started adding, like, ‘How do we keep our community safe as well and making sure that we’re supporting them in that regard?’ I look forward to continuing to learn about everything from trust and safety to responsible ways to leverage AI so that, maybe, we can get back to people even faster or with even better information.”

“How can we maybe integrate some of these features within our games? Can we have better player support options if you’re in a VR headset and you’re having an issue or a question? Can we get back to you much faster without you even having to take the headset off?”

“With every step we make,” Hall said, the key question must remain, “How is this best serving the player?”

Something Hall didn’t foresee but that has surfaced during her journey involves reclaimed language, or reappropriated language.

“When we started moderating” what players were saying in Among Us 3D, Hall explained, “we took the stance, which I still don’t think was unreasonable at the time, to say like, ‘Racial slurs are racial slurs.’”

That may sound fine philosophically, she maintained, but when she and others went into the moderation software and heard “what people are saying in-game, and hearing some high five moments, some really good moments, where someone is using reclaimed language, trying to take back a position of power, [there’s] a very complicated sociological aspect” to what Schell Games should do in that instance, if anything.

“That’s not something that we considered right out of the gate,” Hall recalled.

“We don’t want to disempower people who are trying to leverage that very nuanced situation. Talking to people internally, changing our perspective on the way that we were doing moderation, I think that was a big win and something that…we’ve continued to try to evolve as we go.”

According to Hall, player support can become “really complicated when you’re talking about something like voice chat, and you don’t know whether or not the person who’s using this phrase in a short snippet of audio is a member of that group…Are they using it satirically? Are they using it to hurt someone who may have said that earlier in the match?”

Posner asked Hall if she’s racked up some other wins in 2025.

In terms of Among Us 3D, Hall responded, “we just launched the private lobby mode, unmoderated private lobbies…”

“The muting system, which happened earlier this year, a few months back, that is another big one,” she said. The latter option lets players silence incoming chat from specific players.

“We first launched the game and we started moderation in 2023. This was a brand new thing for us. We kind of took a hard line on things. And then we said, ‘You know what, that was a little unfair. Let’s modify our practices. Let’s continue to evolve these guidelines...’”

“It will continue to be an evolution and an iterative process,” Hall stressed to Posner. “When I look back, especially in the last year with those two big [changes], auto mute and unmoderated private lobby, I feel really good that we were able to take player feedback and do something about it. That, ultimately, objectively makes the game more welcoming.”

Unmoderated private lobbies and reclaimed language are also connected. Schell, Hall elaborated, “heard a lot of feedback from players who said, ‘Hey, I’m making private lobbies and you’re moderating me in my private lobbies. It’s me and my friends playing in a living room…This is not a space that I want you guys listening. We are vulgar in my house. If this is truly a private lobby, butt out with the moderation.’ And we thought, ‘You know what, that’s fair.’”

Hall and some engineers are still testing “the efficacy of that, but we have heard some very positive feedback from players. And there’s a lot of guardrails on that system as well. If you’re playing with the youth account, you can’t join an unmoderated lobby. If you are joining an unmoderated lobby, the room code is very obvious. It starts with an ‘X’ to let you know, ‘Hey, this is not moderated.’”

“We want you to be aware, like, ‘We aren’t here to try and make sure that everything is adhering to the Code of Conduct as we would in a moderated lobby.’ That’s just another example of the ways that we’ve been listening to the community and trying to make sure that those concerns are addressed.”
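For readers who think in code, the two guardrails Hall mentions could be sketched roughly as follows. This is an illustration under stated assumptions, not Schell's implementation: the `Account` and `Lobby` types, the `is_youth` flag, and the `can_join` function are hypothetical names, and the sketch assumes that only unmoderated room codes begin with "X".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    player_id: str
    is_youth: bool  # hypothetical flag, assumed to be set during age verification


@dataclass(frozen=True)
class Lobby:
    room_code: str

    @property
    def is_unmoderated(self) -> bool:
        # Per Hall, unmoderated room codes start with an "X".
        # Assumption: moderated room codes never do.
        return self.room_code.startswith("X")


def can_join(account: Account, lobby: Lobby) -> bool:
    """Return True if the account may enter the lobby."""
    # Per Hall, youth accounts cannot join unmoderated lobbies.
    if lobby.is_unmoderated and account.is_youth:
        return False
    return True
```

Keeping the "unmoderated" signal in the room code itself means the same string both warns the player and drives the access check, so the two cannot drift apart.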

The tight collaboration between Innersloth and Schell Games around Among Us has resulted in a joint Code of Conduct that’s on Innersloth’s website.

Most social video games have a public-facing Code of Conduct—which is distinct from a EULA—that users need to follow if they want to stay out of hot water. These rules lay out what players are allowed to do and what they can’t do without risking consequences and, similar to chat moderation in multiplayer games and leak-free email-based customer service pipelines, they’re a building block that’s part of an effective player support framework.

These frameworks appear to exist across the gamut of video game publishers today. Microsoft’s Xbox platform, to take a much, much larger example, has the Xbox Community Standards. Like Schell’s and Innersloth’s Code of Conduct, these Xbox standards “provide a comprehensive framework for maintaining a positive and inclusive gaming environment. It sets clear expectations for player behavior, emphasizing the importance of respect, fairness, and safety. These standards include guidelines on keeping content appropriate, avoiding harmful behavior, respecting others’ rights, and maintaining privacy. They also highlight the importance of contributing positively to the community...”

Such standards are part of a set of interrelated components that comprise broader player community trust and safety systems.

Microsoft’s latest Xbox Transparency Report, which was published in February, notes what its other building blocks are:

Player controls that allow customization of a range of settings across all platforms

Proactive tools/tech that can identify and remove inappropriate content that violates platform standards

Player reporting tools and backend tracking and adjudication systems

An appeals process for players who’ve been found in violation of community standards, and

Ongoing learning and investments so the entire system (hopefully) improves over time.

They may be much, much smaller in scale and perhaps less sophisticated, but Hall’s and Posner’s exchange implied that most, if not all, of these building blocks exist in Schell’s (and probably Innersloth’s) player support frameworks.

On top of this, we might sprinkle a common “safety by design” mindset. The aforementioned Xbox Transparency Report stated that their “approach to safety-by-design and a dedicated team ensures safety is, and will always be, a priority for everyone…” (A Player Driven guest that’s deeply enmeshed in player community trust and safety matters, Netflix Games’ Christina Camilleri, also highlighted her commitment to this mindset in a separate blog from January.)

One last point about these frameworks may be worth highlighting before moving on: they’re inherently fuzzy and complex. As Hall’s reclaimed language example illustrated, whenever one’s managing the interactions of live human beings at scale, opportunities for messy and grey areas abound.

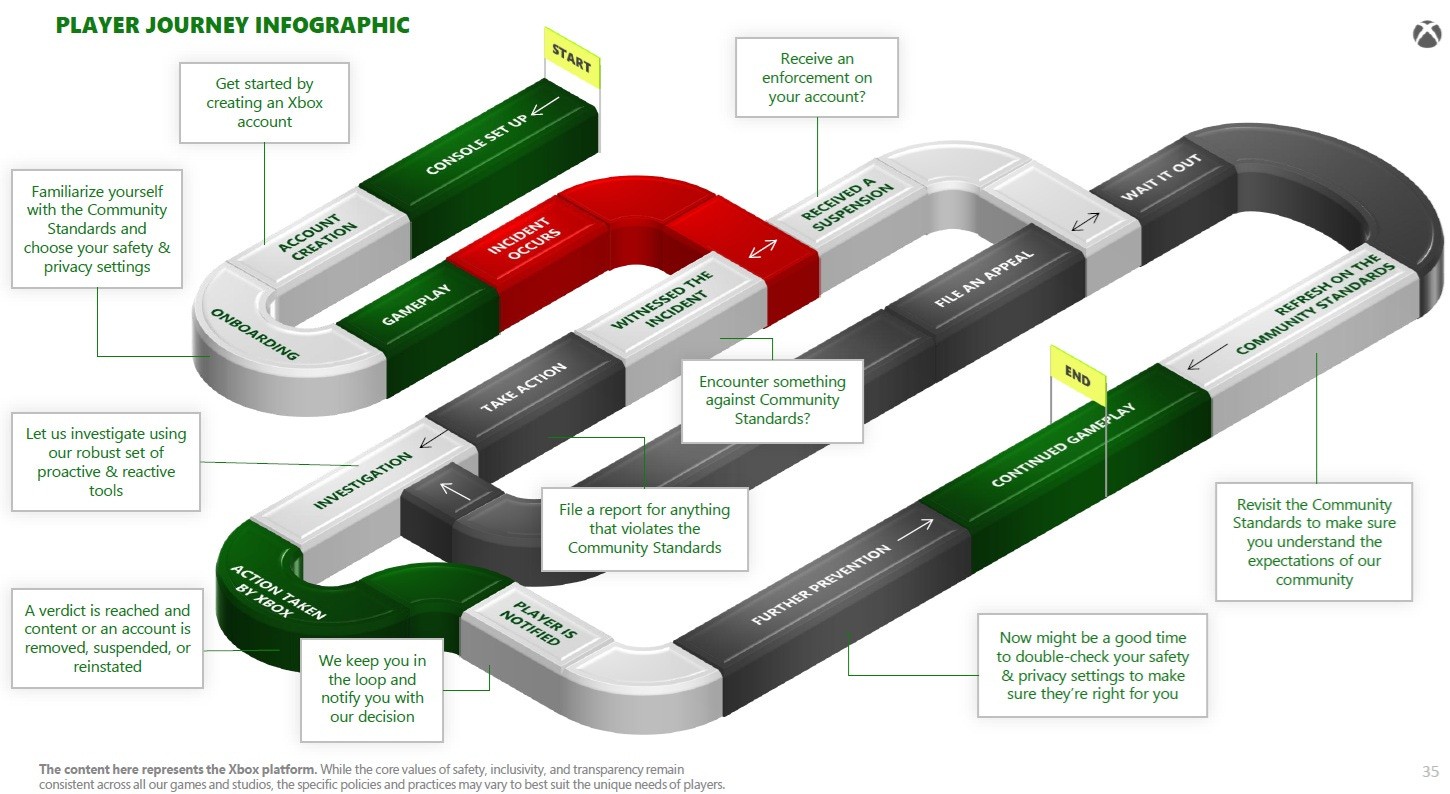

Per the Xbox Transparency Report, if a player drifts into potentially hot water (see the red “incident occurs” link in the player support pipeline below), a multistep process is kicked off. Several steps later, players are either suspended/punished or cleared of wrongdoing.

Everything below the red workflow link can get messy, Hall’s example implied, especially in light of the fact that no company wants to antagonize paying customers. They’re going to want to err on the side of “innocent until proven guilty.” These manual, custom processes don’t simply involve judgement calls; they’re also inherently expensive to run at scale. Both these considerations are worth bearing in mind once we get to Hall’s AI-centric insights.

By the way, it’s interesting there’s a player journey “Start” gate in the figure above but no offramp…just “continued gameplay.” I see what you’re aiming for, Xbox!

Player Reporting Systems and Added Protections for Kids

Sometimes reporting systems can be weaponized against other players. We want to be cognizant of making sure we make time to look at an issue from all perspectives. We don’t want to assume that someone is absolutely right with what they’re saying...but we also want to make sure that we are looking into any reports that we’re getting of toxicity or hacking or cheating.—Laura Hall

In their December conversation, Posner and Hall dug into how player support processes are distinct across two of Schell’s larger franchises, I Expect You to Die and Among Us.

Hall noted that I Expect You to Die debuted in 2016. She said that VR title has attracted “a very different audience than what we see from Among Us 3D.” The latter franchise generates more correspondence and is “a live online multiplayer game versus the I Expect You to Die experience, where you’re in the headset [but] it’s not multiplayer, you’re kind of on this journey as a spy, by yourself.”

From I Expect You to Die players, Hall continued, Schell more often gets “design questions like, ‘Hey, I’m really having trouble beating this level. What am I doing wrong?’ We can say, ‘Oh, they missed that little shard of glass that you need...’”

That’s unlike “the Among Us 3D crowd, who’s saying, like, they had an issue with another player...We’re doing updates on Among Us 3D all the time, so there are new content questions, Live Ops type of questions” that come up too. (Note: Player Driven published a fairly deep dive into Live Ops game processes recently, with a big assist from ArenaNet’s Crystin Cox.)

Posner asked Hall about the tools Schell uses to handle customer/player support and how they make “it easier and accessible for players to easily reach out” if they encounter a problem.

Hall didn’t specify a vendor’s tools but she noted some newer support directions Schell has been weighing. They’ve had numerous internal discussions, for example, about whether there’s “a way, especially for VR players, to submit a ticket to us from within their headset. If players are having a problem with another player, can they hit a report button that automatically, on the back end, generates a lot of information for our moderation team, [and] gives a timestamp?”

“A lot of times,” Hall elaborated, “we’ll have someone email us afterwards, ‘There was a player that I didn’t particularly care for. Here’s their display name.’ And we go look it up and 20 people have some variant of that display name. We can’t act on a report unless there’s evidence of a problem.”
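To make the display-name problem concrete, here is a minimal sketch of the kind of record an in-game report button might generate. Everything in it is hypothetical (the `PlayerReport` fields, the `build_report` helper, and the ID formats); Hall did not describe Schell's data model. The point is simply that a unique account ID, a match ID, and an automatic timestamp remove the guesswork that a display name sent by email leaves behind.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class PlayerReport:
    reporter_id: str
    reported_id: str  # unique account ID, not the (non-unique) display name
    match_id: str     # lets moderators find the relevant session
    reason: str
    # Captured automatically when the report is created, in UTC.
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


def build_report(reporter_id: str, reported_id: str,
                 match_id: str, reason: str) -> PlayerReport:
    """Create a report from data the game client already knows."""
    if reporter_id == reported_id:
        raise ValueError("players cannot report themselves")
    return PlayerReport(reporter_id, reported_id, match_id, reason)


report = build_report("player-123", "player-456", "match-789", "harassment")
print(report.reported_id, report.created_at.isoformat())
```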

Making Among Us 3D players switch platforms to report an issue with a fellow player is certainly less than ideal, especially because details tend to get fuzzier as time elapses between an alleged incident and its reporting—and we can’t forget some players indeed weaponize flagging systems against innocent parties (an issue of which Netflix’s Camilleri is also aware).

Rolling out more user-friendly flagging systems inside a specific game is something Xbox has also done. Its latest Transparency Report, which we noted in the preceding section, also stated that “Turn 10 expanded their reporting capabilities around user generated content in Forza Horizon 5 and implemented an easier way for players to report other players for cheating and unsportsmanlike conduct...This resulted in a more efficient player reporting process, enabling Turn 10 to take action on a higher number of escalations in 2025.”

This suggests that many of today’s Live Ops games have deployed or are looking at deploying easy-to-use in-game reporting systems that capture enough detail to make player/code violation judgement calls.

Posner pivoted and asked about systems that are designed to protect minors. From a legal angle, these can vary substantively by country and jurisdiction.

The Xbox Transparency Report, for example, noted that a new 18+ age verification system was rolled out in 2025 as “part of our compliance program for the UK Online Safety Act.”

Xbox also forged more partnerships last year to allow the platform “to more efficiently track down and disrupt efforts from bad actors around child sexual abuse and exploitation. In 2025, Xbox joined the Tech Coalition and its Lantern program so we can pool intelligence and securely share signals with our industry peers that strengthen our ability to detect high-risk behaviors.”

In this sense, player community trust and safety frameworks aren’t simply deployed by studios and publishers, they’re becoming interconnected, in some respects, based on industrywide standards and shared best practice investments.

Posner noted Roblox rolled out a 13+ age verification system for unfiltered text chat use in the U.S. in January (related systems are being deployed worldwide). Posner expressed comfort with “giving up a little bit of privacy to make sure that it’s safe for my child.”

Hall nodded.

“My eight-year-old is entering into that space,” she said.

One of the nice things with Among Us 3D, [is] we have the option, for parents, if you enter your birth date and it indicates you are below the age of consent, you are required to have a parent okay all of that. And as part of that verification process, the parent can say, “You can play this game, but you can't use voice chat,” or…you have the ability to granularly decide what functionality your child has access to. For example, display names are disabled. If I'm the pink bean, it’ll just say “pink.”

Such investments should be understood, at least partly, as risk mitigation tools. The scores of individual U.S. lawsuits that Roblox Corp. was facing in 2025, most of which involved allegations of failing to protect minors from harm, were recently consolidated into a federal multidistrict litigation (MDL) in the Northern District of California. If you’re offering Live Ops games, especially if minors can access them, you’re running a legal, PR, ethical, and financial risk if you don’t have a quality age verification system in place.

For what it’s worth, the Xbox Transparency Report stated the platform received 39 million reports/flags from players globally last year. 9.6% of them—some 3.7 million reports—resulted in “restrictions imposed on content and accounts.” The top two buckets of actions were taken against spammers (1.7 million) and users of profanity/vulgarity (560,000).
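As a quick sanity check, the figures quoted above hang together. The short Python snippet below uses only the numbers cited in this article; the variable names are ours.

```python
# Figures as quoted from the Xbox Transparency Report in this article.
total_reports = 39_000_000
actioned_share = 0.096

actioned = total_reports * actioned_share
print(f"Actioned reports: {actioned / 1e6:.2f} million")  # 3.74 million

# The two largest buckets of actions, as quoted above.
spam = 1_700_000
profanity = 560_000
top_two_share = (spam + profanity) / actioned
print(f"Top two buckets: {top_two_share:.0%} of actioned reports")  # 60%
```

In other words, spam and profanity together account for roughly three in five of the reports that led to a restriction.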

If even a tiny fraction of such reports fell into a fuzzy/grey area that required custom, manual investigations, the aggregate resources needed to resolve them quickly and effectively (refer back to the Player Journey Infographic in the preceding section for more color) would be equally staggering.

Which brings us to AI.

Why AI Tools Are Making Rapid CX/Support Inroads

On the backend we use, for moderation purposes, a tool called ToxMod that automatically processes all the voice chat that happens within the game, and identifies toxicity as well. We ran the numbers once, and we figured out, based on the number of average active users, we’d have to have over 300 moderators in the game listening to it live to do it without the help of this tool.—Laura Hall

During their December exchange, Hall told Posner that Schell used several tools “that generate reports for our team to take a look at.”

A tool that Hall did specify was Modulate’s ToxMod, which game studios and other social platforms use to understand what’s happening in their digital voice channels. Billed as the “#1 AI model for understanding voice conversations,” ToxMod analyzes incoming voice traffic in real-time and helps companies mitigate any harassment, hate, abusive/threatening, and otherwise toxic behaviors detected in their Live Ops-related systems.

Hall said that ToxMod “helps us to sift out what we believe to be our most toxic moments in the game [Among Us 3D] and issue either mutes or bans very quickly based on that evidence…We do use a lot of tools like that that help us to get the job done.”

Posner asked Hall to double-click on how Schell has used ToxMod.

Hall turned back the clock to the game’s launch window.

“When Among Us [VR] initially launched it did not have moderation in it,” Hall explained. “We worked very hard to quickly retrofit a system that could hook into the game, and work with ToxMod. So ToxMod does all of the listening, if you will, and the identifying of any toxic moments. And it escalates any potentially problematic moments to our proprietary moderation system, which we’ve called Airlock Admin.”

How apropos. Whether or not these moderators wear dayglo jumpsuits, Hall didn’t say.

“From there, our moderators can review clips and identify where there might be potential toxicity. And for a long while, we were only able to dole out bans. We have since heard…from players, ‘Hey, I’m banned and…this feels a little unfair. I paid for this game. I’d like to be playing it.’”

Schell, which had been handing out one- to seven-day bans, responded by reducing “the ban length, and then we were able to work in a system of muting as well, which is a lot less punitive than being banned. You can keep playing, you just don’t have your microphone.”
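The flow Hall describes (automated flagging, human review, then a mute or a ban) can be sketched in a few lines of Python. To be clear, this is a guess at the shape of such a system, not a description of Airlock Admin or ToxMod: the `FlaggedClip` type, the `choose_action` function, and especially the "mute first, ban repeat offenders" rule with its `ban_after` threshold are hypothetical. Hall did not say how moderators choose between the two sanctions.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NO_ACTION = "no_action"
    MUTE = "mute"
    BAN = "ban"


@dataclass(frozen=True)
class FlaggedClip:
    player_id: str
    confirmed_toxic: bool  # a human moderator's verdict after reviewing the clip
    prior_violations: int  # hypothetical count of earlier confirmed incidents


def choose_action(clip: FlaggedClip, ban_after: int = 2) -> Action:
    """Pick the least punitive action that fits the reviewed clip."""
    if not clip.confirmed_toxic:
        return Action.NO_ACTION
    # Hypothetical escalation rule: mute first, ban only repeat offenders.
    if clip.prior_violations >= ban_after:
        return Action.BAN
    return Action.MUTE


print(choose_action(FlaggedClip("player-456", True, 0)))  # Action.MUTE
```

Defaulting to the lighter sanction mirrors the player feedback Hall cites: a muted player can keep playing the game they paid for.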

When ToxMod was initially implemented, according to Hall, Schell gathered several months’ worth of voice data to determine a baseline level of “toxicity in the game. That was our biggest complaint with the game: There are other toxic players. ‘I’m being harassed. I would love to play this game, but it’s a little bit of a rough environment.’ The game launched in November 2022. We added moderation in May” of the following year.

We saw a dramatic reduction in toxicity. When we first started moderating, we were seeing around 8% of players getting bans in the first couple weeks. That dropped to 2% after about a month of moderation. Then it did, gradually, kind of go back up. It’s generally [since been] between two and five percent.

Modulate does “a great job of continuing to evolve their product to better support the community, and making sure that we are identifying toxicity and toxicity only,” said Hall.

“I think we’ll just continue to listen and see what’s working and improve what isn’t.”

Makes sense.

Hall’s comments implied ToxMod helped reduce the amount of toxicity experienced by Among Us 3D players by half, roughly speaking, relative to the game’s 4Q22-1Q23 pre-implementation baseline.
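The "by half" estimate can be checked against Hall's own figures, treating the share of players receiving bans as a rough proxy for toxicity (that proxy is our assumption, not something Hall claimed). The function name below is ours.

```python
def relative_reduction(before: float, after: float) -> float:
    """Fractional drop from `before` to `after`."""
    return (before - after) / before


baseline = 8.0  # % of players banned in the first weeks of moderation
scenarios = [("low end", 2.0), ("midpoint", 3.5), ("high end", 5.0)]

for label, rate in scenarios:
    drop = relative_reduction(baseline, rate)
    print(f"{label}: {rate}% banned, {drop:.0%} below baseline")
```

Against the 8% starting point, the two-to-five percent range Hall cites works out to a drop of somewhere between about 38% and 75%, and the midpoint of that range sits a little above one half.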

It’s not just that: the AI tool has also vastly reduced the manual (human) work required to manage all of the game’s voice traffic, and helps rationalize and streamline the process of muting and banning players as appropriate.

Microsoft’s Xbox Transparency Report threw the use of AI in a player trust and safety context into even starker relief. Across all of Xbox’s communication channels last year, the report showed that 14.8 billion pieces of user-generated content were “proactively scanned” globally. About 2.5% of that content “was identified as containing harmful or policy-violating content.” The vast majority of that content was scanned by AI tools that leveraged machine learning to identify the offending content.

The bottom line, according to Xbox, was that “363M restrictions were imposed on content and 16M restrictions were imposed on accounts as a result of proactive detection.” The leading specific bucket of infractions, at 150 million, was “Abuse of our Platform and Services,” which means something the user posted (text, username, images) was found to violate community standards. The second largest bucket, at 62 million, was “Profanity and Vulgarity,” which we assume mostly referred to voice chat infractions.

Microsoft was quite clear that AI-based tools are core building blocks of Xbox’s trust and safety processes. Xbox believes “automation and the use of AI-enabled solutions, combined with human expertise, play crucial and complementary roles in effectively identifying, reporting, and preventing harms at scale…and help focus human moderation efforts on more nuanced and complex issues.”

Those are the grey nooks and crannies.

Let’s be clear, though: the overwhelming majority of trust and safety-related work is already being done by AI-powered tools, presumably because (1) they work pretty well and are getting better, and faster, over time and (2) they’re immensely more cost-effective than manual/human alternatives. Trust and safety’s rubber meets the proverbial road at that intersection today and, at the risk of torturing the metaphor, that genie isn’t going back in the bottle. Xbox’s report ultimately showed that close to 97% of all reports were processed using automated systems last year. Xbox undoubtedly aims to drive that share higher this year.

We assume this vision is common across most studios and publishers that are running Live Ops-oriented games, and Schell Games’ Laura Hall didn’t dissuade us from reaching this conclusion.

“I think we are at a very interesting nexus in history with so many new AI tools,” Hall told Posner toward the end of their discussion. “Now you can plug a concept into [Google’s] NotebookLM... It’s generating so many new resources.”

Posner agreed that NotebookLM is “one of the greatest things out there.”

“I’m asking it to quiz me on things,” Hall responded. “Now I’ve really, really committed some of this stuff into my own mind. And I can think about that in a much more dynamic way and in an incredibly blisteringly fast way.”

“I remember reading a book during grad school that talked about the definition of creativity being the intersection of existing ideas and existence. Like, everything that we’ve explored before, just finding new intersections for those things. And I think AI is able to help us do that really, really quickly when used responsibly.”

Agreed, and the word responsibly carries a ton of weight there.